Fill in the BLANC: Human-free Quality Estimation of Document Summaries

Abstract

BLANC is an approach to the automatic estimation of document summary quality. Methods such as ROUGE require human-written reference summaries, whereas BLANC allows fully human-free summary quality estimation. The goal is a method that is:

- objective

- reproducible

- fully automated

1. Introduction

Estimation methods:

ROUGE family of methods:

- Advantages:

- well-defined

- reproducible

- Disadvantages:

- require a human-written reference summary or summaries for comparison, completely disregarding the original document text.

- limited to measuring a mechanical overlap of text tokens, with little regard to semantics.

Human evaluation:

- Advantages:

- far more meaningful than ROUGE

- far more powerful than ROUGE

- Disadvantages:

- far less reproducible

Train a model on document summaries annotated with human quality scores

- Advantages:

- evaluate summaries without further human involvement

- achieve high agreement with human labelers

- objective and reproducible

- Disadvantages:

- does not generalize: each specific task requires its own targeted training

Estimate how “helpful” a summary is for the task of understanding a text

- Disadvantages:

- one must choose from a vast set of questions one might ask of a text, presupposing knowledge of the document itself and seriously limiting its reproducibility.

2. Method

2.1 Introducing BLANC

Consider these ingredients for an ideal summary quality estimator:

- It should measure the functional qualities of a summary as directly as possible.

- It should reliably estimate quality across a broad range of document domains and styles. (Model should be well-documented, well-understood, widely used, and open source.)

The definition of BLANC: a measure of how well a summary helps an independent, pre-trained language model while it performs its language understanding task on a document. Here the focus is on the masked token task, also known as the Cloze task.

BLANC scores should strongly correlate with the summary quality scores of human judges, even when split into multiple quality dimensions. These are the key intuitions:

- A more informative summary should help a language model with tasks such as sentence entailment prediction and masked token reconstruction.

- A less factually correct summary should be less helpful to a language model performing inference on the document’s text.

- A more fluent summary should be better understood by a language model because it better matches the distribution of text on which the model was pre-trained.

We explore two versions of BLANC: BLANC-help and BLANC-tune. The essential difference between them:

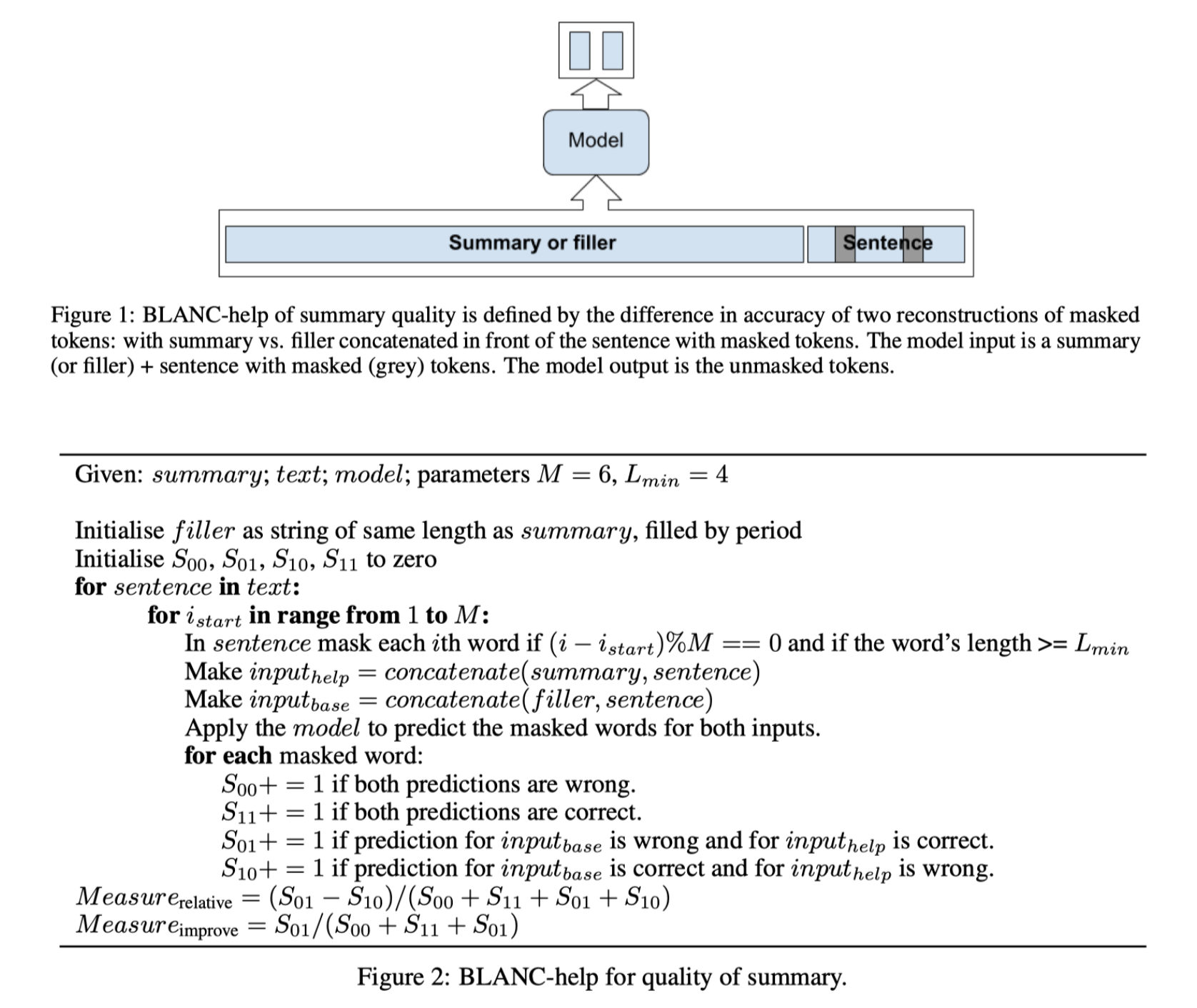

BLANC-help uses the summary text by directly concatenating it to each document sentence during inference.

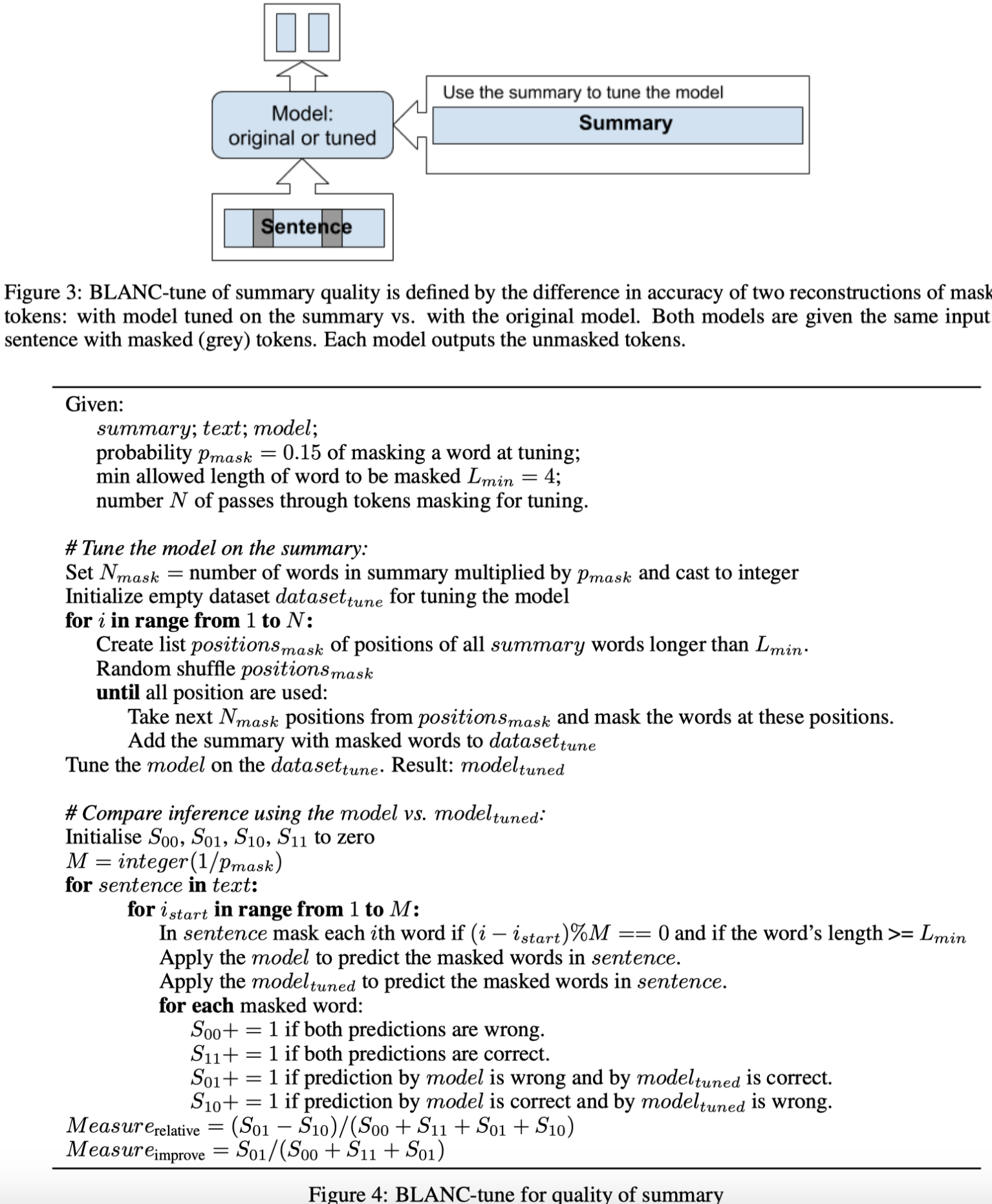

BLANC-tune uses the summary text to fine-tune the language model, and then processes the entire document.

Thus, with BLANC-help, the language model refers to the summary each time it attempts to understand a part of the document text. With BLANC-tune, the model learns from the summary first and then uses its gained skill to help it understand the entire document.

2.2 BLANC-help

There are many possible choices for how to mask the tokens. Our aim is to mask approximately 15% of the tokens in a sentence and to cover all tokens evenly.

The unmasking is done twice for each sentence of the text and for each allowed choice of masked tokens in the sentence. First, the unmasking is done for an input composed of the summary concatenated with the sentence. Second, the unmasking is done for an input composed of a “filler” concatenated with the sentence. The filler has exactly the same length as the summary, but each summary token is replaced by a period symbol (“.”).
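The masking and filler construction described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names, the `step` parameter (masking every `step`-th token, so roughly 1/`step` of a sentence is masked per choice), and the `[MASK]` and `[SEP]` token strings are all assumptions made for the example.

```python
def mask_choices(num_tokens, step=7):
    """Return the allowed mask sets for a sentence of `num_tokens` tokens.

    Choice k masks tokens k, k + step, k + 2*step, ... so that, across all
    choices, every token is masked exactly once. `step` is an assumed
    parameter; step=7 masks roughly 14% of the tokens per choice.
    """
    return [list(range(offset, num_tokens, step))
            for offset in range(min(step, num_tokens))]


def build_inputs(summary_tokens, sentence_tokens, masked_positions,
                 mask_token="[MASK]", sep_token="[SEP]"):
    """Build the two inputs that are unmasked for one mask choice."""
    masked = set(masked_positions)
    masked_sentence = [mask_token if i in masked else tok
                       for i, tok in enumerate(sentence_tokens)]
    # The filler has the same length as the summary, with every
    # summary token replaced by a period.
    filler = ["."] * len(summary_tokens)
    with_summary = summary_tokens + [sep_token] + masked_sentence
    with_filler = filler + [sep_token] + masked_sentence
    return with_summary, with_filler


summary = "the cat sat".split()
sentence = "a small cat sat quietly on the old mat today".split()
for positions in mask_choices(len(sentence)):
    with_summary, with_filler = build_inputs(summary, sentence, positions)
    # Each pair would then be passed to a masked language model.
```

Because the filler matches the summary in length, the two inputs in each pair have identical shape and differ only in whether the summary's content is present.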

2.3 BLANC-tune

As in the case of BLANC-help, we define BLANC-tune by comparing the accuracy of two reconstructions: one that does use the summary, and another that does not. In the case of BLANC-help, this was the difference between placing the summary vs. placing the filler in front of a sentence. Now, in the case of BLANC-tune, we compare the performance of a model fine-tuned on the summary text vs. a model that has never seen the summary.
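For either variant, one way to express this comparison is as a difference of unmasking accuracies. The sketch below assumes the per-token outcomes (was the masked token reconstructed correctly?) have already been collected as booleans; the function name and input representation are hypothetical, and the exact normalization used in the paper may differ.

```python
def accuracy_gain(correct_with_summary, correct_without_summary):
    """Accuracy with the summary minus accuracy without it.

    Both arguments are sequences of booleans, one entry per masked token,
    aligned so that index i refers to the same masked token in both runs.
    """
    if len(correct_with_summary) != len(correct_without_summary):
        raise ValueError("outcome lists must cover the same masked tokens")
    total = len(correct_with_summary)
    if total == 0:
        raise ValueError("no masked tokens to score")
    acc_with = sum(correct_with_summary) / total
    acc_without = sum(correct_without_summary) / total
    return acc_with - acc_without


# BLANC-help: summary prefix vs. filler prefix.
# BLANC-tune: model fine-tuned on the summary vs. the original model.
gain = accuracy_gain([True, True, False, True],
                     [True, False, False, False])
```

A positive value means the summary helped the model reconstruct more masked tokens; a value near zero means it made little difference.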

2.4 Extractive summaries: no-copy-pair guard

In the case of purely extractive summaries, the process of calculating BLANC scores may pair a summary with sentences from the text that have been copied into the summary. Such exact copies would be unfairly helpful in unmasking words in the original sentence.

To guard against this, we may exclude any pairing of exact copy sentences from the calculation of the measure. There are two ways to do so while iterating over the text sentences:

- Whenever a sentence has an exact copy in the summary, the sentence is skipped.

- Whenever a sentence has an exact copy in the summary, the copy is removed from the summary (only for this specific step in the process).
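The two guard behaviors described above can be sketched as follows. This is an illustration under assumptions: the document and summary are taken to be already split into sentences, an "exact copy" is treated as plain string equality, and both function names are hypothetical.

```python
def skip_copied_sentences(doc_sentences, summary_sentences):
    """Skip any document sentence that has an exact copy in the summary."""
    copied = set(summary_sentences)
    return [(sentence, list(summary_sentences))
            for sentence in doc_sentences
            if sentence not in copied]


def drop_copy_from_summary(doc_sentences, summary_sentences):
    """Keep every document sentence, but remove its exact copy from the
    summary for that sentence's step only."""
    return [(sentence, [s for s in summary_sentences if s != sentence])
            for sentence in doc_sentences]


doc = ["A.", "B.", "C."]
summary = ["B.", "D."]
pairs_skipping = skip_copied_sentences(doc, summary)
pairs_dropping = drop_copy_from_summary(doc, summary)
```

Each returned pair is a (sentence, summary sentences) combination that would go on to the unmasking step. The first function yields fewer pairs; the second keeps all sentences but pairs a copied sentence with a shortened summary.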

3. Comparison with human evaluation scores

The BLANC measures do not require any “gold-labeled” data: neither human-written summaries nor human-annotated quality scores are needed. Theoretically, the measures should reflect how fluent, informative, and factually correct a summary is, simply because only fluent, informative, correct summaries are helpful to the underlying language model.

- Author: 鱼咸滚酱

- Link: https://github.com/WangMeng2018/WangMeng2018.github.io/tree/master/2020/03/01/Report-Fill-in-the-BLANC-Human-free-Quality-Estimation-of-Document-Summaries/

- License: Unless otherwise stated, all posts on this blog are licensed under the Apache License 2.0. Please credit the source when reposting!