Attend to the beginning: A study on using bidirectional attention for extractive summarization

Abstract

This work proposes attending to the beginning of a document to improve the performance of extractive summarization models on forum discussion data.

1. Introduction

Text summarization has been applied to many natural language domains: news, academic papers, emails, meeting notes, forum discussions, and so on. Apart from news, summarization in these domains is not yet mature.

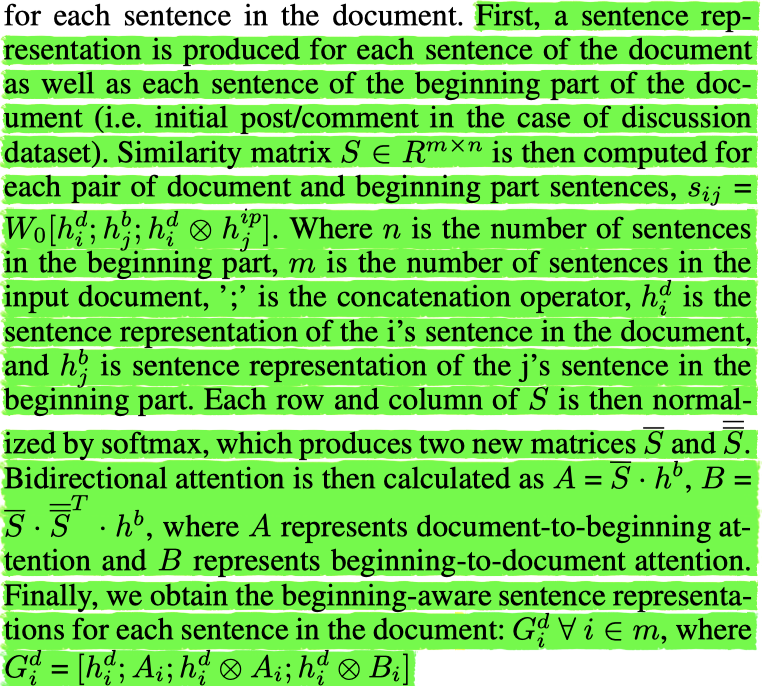

This work proposes integrating a bidirectional attention mechanism into extractive summarization models to help them attend to early pieces of text (the initial comment). The main objective is to exploit the dependency between the initial comment and the comments that follow it, and so to distinguish important replies from irrelevant or superficial ones.
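To make the idea concrete, here is a minimal sketch of bidirectional attention between the initial comment and the replies. It is an illustration under stated assumptions, not the paper's exact formulation: it assumes each sentence is already encoded as a vector, uses plain dot-product similarity, and the names `bidirectional_attention`, `initial`, and `replies` are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def weighted_sum(weights, vectors):
    """Sum of the vectors, each scaled by its attention weight."""
    dim = len(vectors[0])
    return [sum(w * vec[k] for w, vec in zip(weights, vectors)) for k in range(dim)]


def bidirectional_attention(initial, replies):
    """Attend in both directions between initial-comment and reply sentences.

    initial: sentence vectors of the initial comment (assumed pre-encoded)
    replies: sentence vectors of the following comments (assumed pre-encoded)
    """
    # similarity[i][j] compares reply sentence i with initial sentence j.
    similarity = [[dot(r, q) for q in initial] for r in replies]

    # Direction 1: each reply sentence attends over the initial comment.
    reply_to_initial = [softmax(row) for row in similarity]
    # Direction 2: each initial sentence attends over the reply sentences.
    initial_to_reply = [softmax(list(col)) for col in zip(*similarity)]

    # Context vectors: what each side "reads" from the other side.
    reply_context = [weighted_sum(w, initial) for w in reply_to_initial]
    initial_context = [weighted_sum(w, replies) for w in initial_to_reply]
    return reply_context, initial_context, reply_to_initial, initial_to_reply


if __name__ == "__main__":
    initial = [[1.0, 0.0], [0.0, 1.0]]
    replies = [[2.0, 0.0], [0.0, 2.0], [0.0, 0.0]]
    reply_context, _, weights, _ = bidirectional_attention(initial, replies)
    for w, ctx in zip(weights, reply_context):
        print(w, ctx)
```

In a full model, each reply's context vector would typically be combined with its own sentence representation before the classifier decides whether to extract it. How that combination is done is a design choice this sketch leaves open.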

Contributions in this work are threefold:

- First, we integrate a bidirectional attention mechanism into extractive summarization models to help them attend to earlier pieces of text.

- Second, we achieve a new state of the art (SOTA) on the forum discussion dataset with the proposed attend-to-the-beginning mechanism.

- Third, to further verify the transferability of our hypothesis (i.e. attending to the beginning), we perform evaluations showing that attending to earlier sentences in more generic text can also benefit summarization models in domains other than discussions.

2. Dataset

This work employs two extractive summarization datasets.

- The discussion dataset proposed by Tarnpradab, Liu, and Hua (2017), available at https://www.dropbox.com/s/heevii01b1l6s0a/threadDataSet.zip?dl=0 . It is extracted from TripAdvisor forum discussions.

- The Microsoft Word (MSW) dataset (not publicly available). The MSW dataset was used to verify the transferability of our hypothesis to more generic textual domains.

3. Baselines

- Sumy https://pypi.org/project/sumy/

- LSA + clustering

- SummaRuNNer https://github.com/amagooda/SummaRuNNer_coattention

- SiATL

4. Models

5. Experiments

Experimental designs address the following hypotheses:

Hypothesis 1 (H1): Attending to the beginning of a discussion thread would help extractive summarization models select more salient sentences.

Hypothesis 2 (H2): Non-auto-regressive models such as SiATL might be more suitable for discussion thread summarization than auto-regressive models such as SummaRuNNer.

Hypothesis 3 (H3): Adding features such as contextual embeddings (e.g. BERT) and keywords can boost the performance of summarization models.

Hypothesis 4 (H4): Attending to the beginning is transferable to forms of text other than discussion threads.

- Author: 鱼咸滚酱

- Link: https://github.com/WangMeng2018/WangMeng2018.github.io/tree/master/2020/03/04/Report-Attend-to-the-beginning-A-study-on-using-bidirectional-attention-for-extractive-summarization/

- Copyright notice: Unless otherwise stated, all articles on this blog are licensed under the Apache License 2.0. Please credit the source when reposting!