Discriminative Adversarial Search for Abstractive Summarization

Abstract

We introduce a novel approach for sequence decoding, Discriminative Adversarial Search (DAS), which alleviates the effects of exposure bias without requiring external metrics.

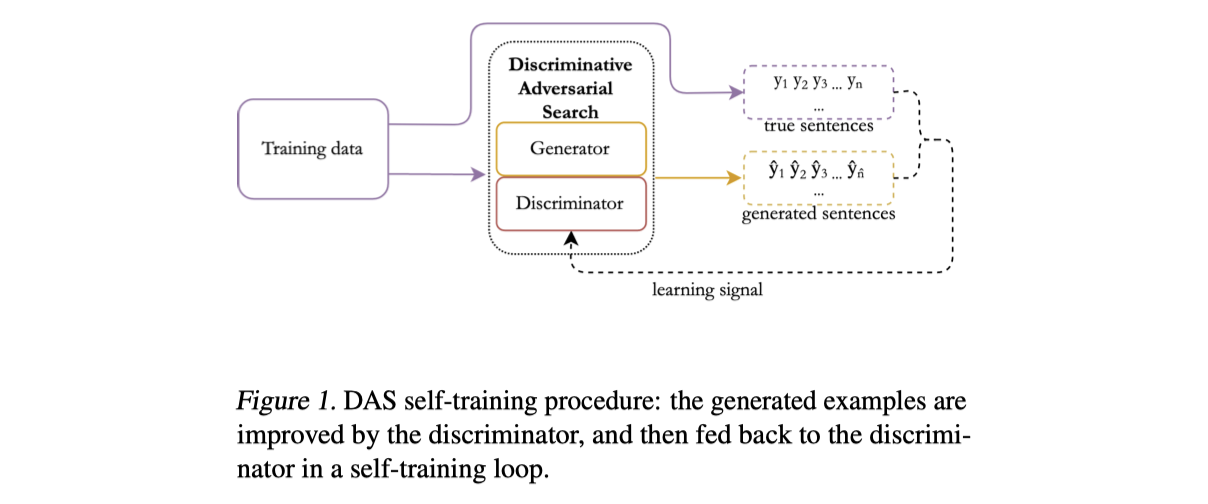

In GANs, a discriminator is used to improve the generator; our method differs in that the generator parameters are not updated at training time, and the discriminator is only used to drive sequence generation at inference time.

1. Introduction

A Teacher Forcing strategy is applied during training: ground-truth tokens are sequentially fed into the model to predict the next token. At inference time, by contrast, ground-truth tokens are not available, and the model only has access to its own previous outputs. This mismatch is referred to as exposure bias: as mistakes accumulate, the model drifts away from the distribution seen at training time, resulting in poor generation outputs.
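The mismatch described above can be illustrated with a minimal sketch. The bigram lookup table `NEXT` and all function names are hypothetical stand-ins for a real generator; they only serve to show how one early mistake is corrected under teacher forcing but propagates when the model feeds on its own outputs.

```python
# Toy next-token model: a hypothetical bigram lookup table, not a real summarizer.
NEXT = {"<s>": "the", "the": "cat", "cat": "sat", "sat": "</s>",
        "dog": "ran", "ran": "</s>"}


def predict_next(prev_token):
    """Return the model's prediction given only the previous token."""
    return NEXT.get(prev_token, "</s>")


def teacher_forced_predictions(reference):
    """Training-style pass: each step is conditioned on the ground-truth prefix."""
    inputs = ["<s>"] + reference[:-1]
    return [predict_next(tok) for tok in inputs]


def free_running_predictions(max_len=10):
    """Inference-style pass: each step is conditioned on the model's own output."""
    output, prev = [], "<s>"
    for _ in range(max_len):
        prev = predict_next(prev)
        output.append(prev)
        if prev == "</s>":
            break
    return output


reference = ["the", "dog", "ran", "</s>"]
forced = teacher_forced_predictions(reference)  # mistake at step 2 only
free = free_running_predictions()               # mistake at step 2 carries on
print(forced)
print(free)
```

With teacher forcing, the wrong prediction at the second step does not affect the third step, because the ground-truth token is fed back in. In the free-running pass, the same mistake changes every later prediction.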

To tackle exposure bias, Generative Adversarial Networks (GANs) represent a natural alternative to previously proposed approaches: rather than learning a specific metric, the model learns to generate text that a discriminator cannot differentiate from human-produced content.

In GANs, the discriminator is used to improve the generator and is dropped at inference time. DAS does not modify the generator parameters at training time, and uses the discriminator at inference time to drive the generation towards human-like textual content.

Contributions:

- propose Discriminative Adversarial Search (DAS), a novel sequence decoding approach that alleviates the effects of exposure bias and optimizes on the data distribution itself rather than for external metrics;

- apply DAS to the abstractive summarization task: even a naively discriminated beam, i.e. without the self-retraining procedure, improves over the state of the art on various metrics;

- report further significant improvements when applying discriminator retraining.

3. Datasets

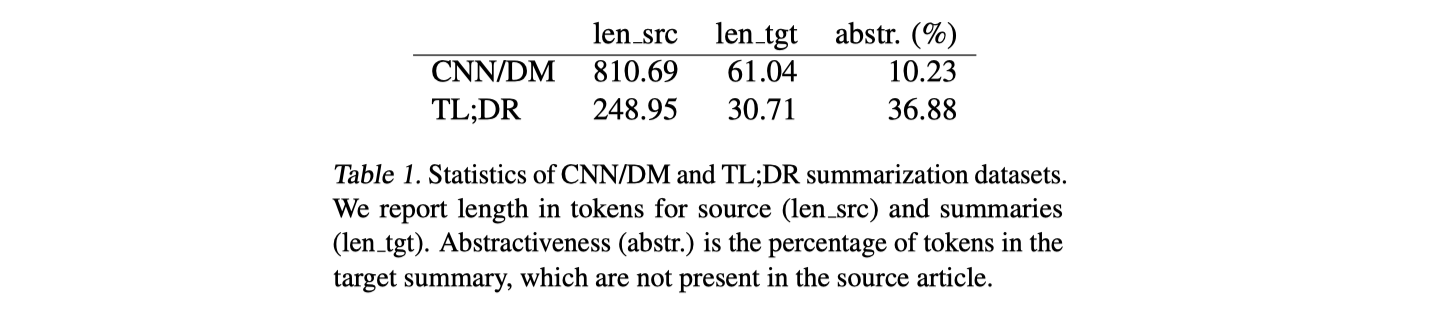

Two datasets:

CNN/DM

TL;DR (https://zenodo.org/record/1168855)

TL;DR was chosen for two main reasons. First, its data is relatively out-of-domain compared to the samples in CNN/DM. Second, its characteristics are also quite different: compared to CNN/DM, the TL;DR summaries are half as long and three times more abstractive.
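These notes do not say how abstractiveness is measured. One common proxy, assumed here purely for illustration, is the fraction of summary n-grams that never appear in the source text. The function name `novel_ngram_ratio` and the example sentences are hypothetical.

```python
def novel_ngram_ratio(source_tokens, summary_tokens, n=2):
    """Fraction of summary n-grams that never appear in the source."""
    def ngrams(tokens):
        return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

    summary_ngrams = ngrams(summary_tokens)
    if not summary_ngrams:
        # Summary shorter than n tokens: nothing to compare.
        return 0.0
    source_set = set(ngrams(source_tokens))
    novel = sum(1 for gram in summary_ngrams if gram not in source_set)
    return novel / len(summary_ngrams)


source = "the cat sat on the mat near the door".split()
extractive = "the cat sat on the mat".split()   # copied from the source
abstractive = "a cat rested on a rug".split()   # reworded

extractive_ratio = novel_ngram_ratio(source, extractive)
abstractive_ratio = novel_ngram_ratio(source, abstractive)
print(extractive_ratio, abstractive_ratio)
```

A summary copied verbatim from the source scores 0, while a fully reworded one scores 1. Under this proxy, a dataset with a higher average ratio would count as more abstractive.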

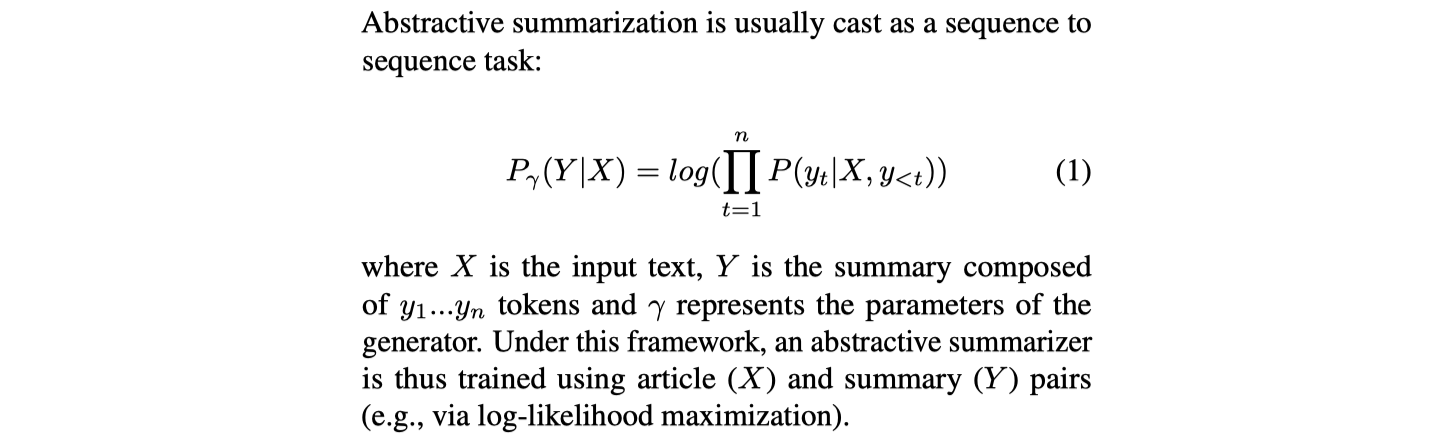

4. Discriminative Adversarial Search

The proposed model is composed of a generator G (described in 4.1) coupled with a sequential discriminator D (described in 4.2): at inference time, for every new token generated by G, the score and the label assigned by D are used to refine the probabilities within a beam search and to select the top candidate sequences.

4.1. Generator

4.2. Discriminator

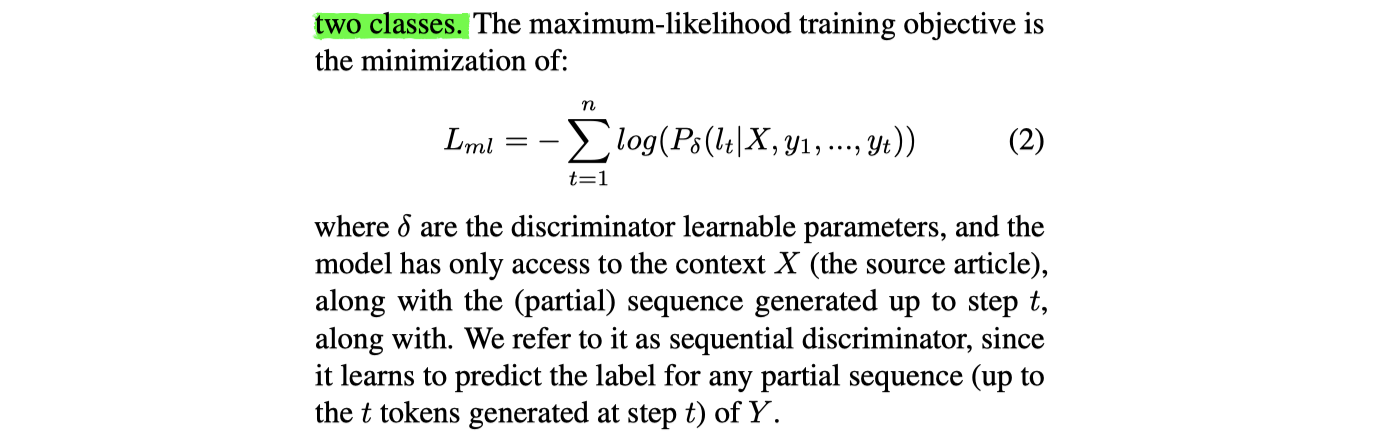

The objective of the discriminator is to label a sequence $Y$ as being human-produced or machine-generated. At each generation step, the discriminator, instead of predicting the next token among the entire vocabulary $V$, generates a label among two classes.
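The per-step labelling can be sketched as follows. The scoring heuristic in `human_probability` is a hypothetical stand-in for the learned discriminator (it simply penalises repeated tokens); only the interface, one binary label per prefix, mirrors the description above.

```python
import math


def human_probability(prefix):
    """Stand-in for the learned discriminator: P(human | prefix).

    Hypothetical heuristic: repeated tokens make a prefix look machine-generated.
    """
    if not prefix:
        return 0.5
    repeats = len(prefix) - len(set(prefix))
    logit = 1.0 - 2.0 * repeats
    return 1.0 / (1.0 + math.exp(-logit))


def label_prefixes(sequence):
    """Emit one (probability, label) pair per generation step."""
    results = []
    for t in range(1, len(sequence) + 1):
        p = human_probability(sequence[:t])
        results.append((p, "human" if p >= 0.5 else "machine"))
    return results


labels = label_prefixes(["the", "cat", "the", "the"])
for step, (p, label) in enumerate(labels, start=1):
    print(step, round(p, 3), label)
```

Because a label is produced for every prefix rather than only for the finished sequence, the discriminator can be queried while decoding is still in progress.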

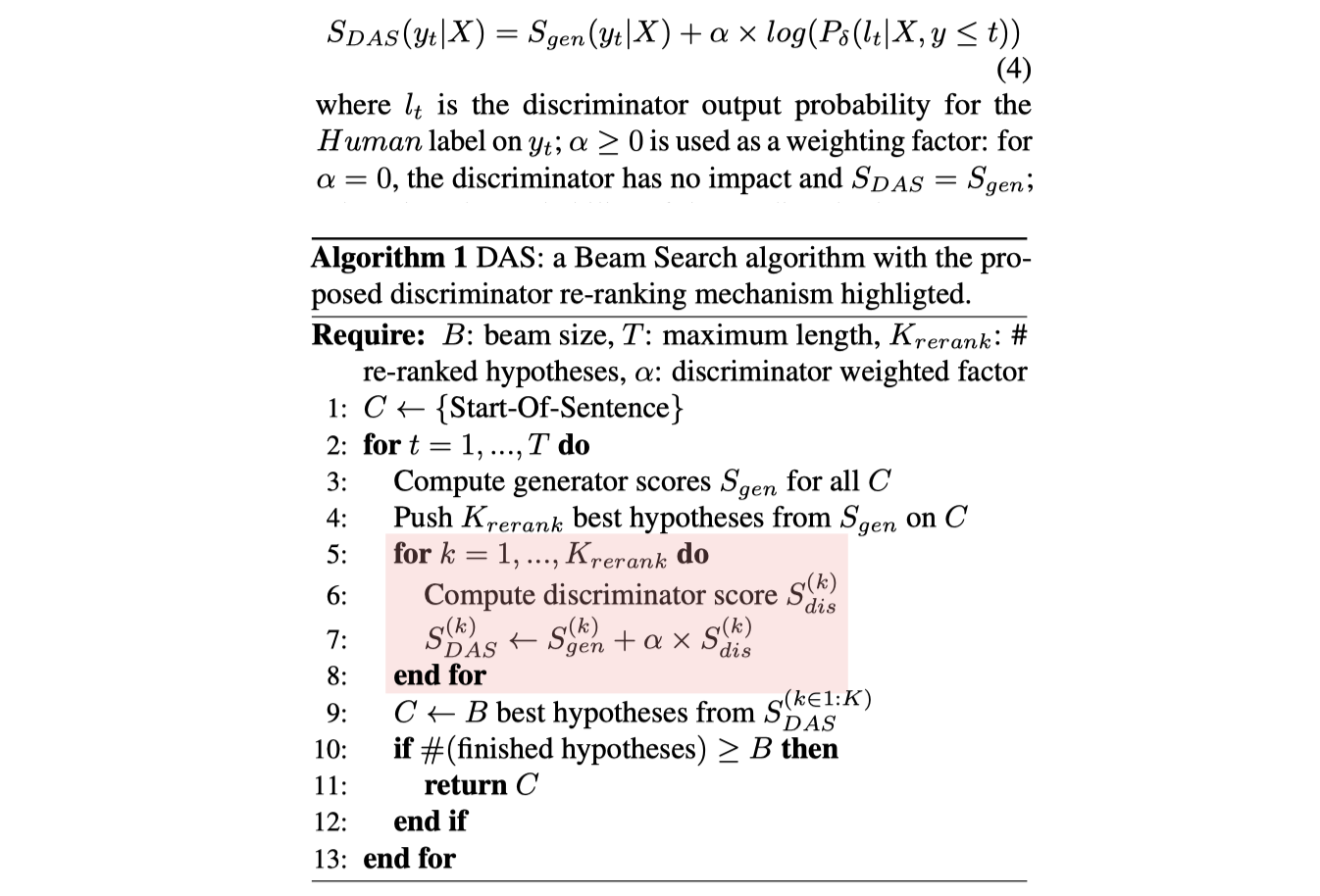

4.3. Discriminative Beam Reranking

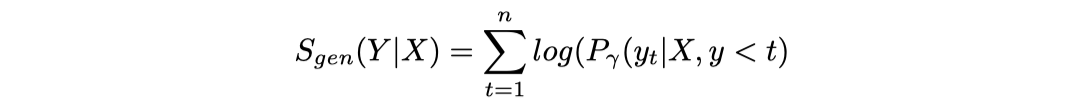

At each generation step, the generator assigns a probability to each candidate for the next token $y_t$ in its vocabulary $V$. Beam search is then used to approximately maximize the probability of the generated sequence.

We propose a new score $S_{DAS}$ that refines the generator score $S_{gen}$ during beam search using the log probability assigned by the discriminator.
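A minimal sketch of one re-ranking step follows. These notes do not give the exact formula, so the code assumes a simple linear combination, $S_{DAS} = S_{gen} + \alpha \cdot S_{dis}$, where $S_{dis}$ is the discriminator's log probability that a candidate is human-produced. The weight `alpha`, the function name `das_rerank`, and the toy discriminator are all hypothetical.

```python
import math


def das_rerank(candidates, discriminator, alpha=1.0, beam_size=2):
    """Keep the beam_size candidates with the highest combined score.

    candidates: list of (tokens, s_gen) pairs; s_gen is the generator log-probability.
    discriminator: callable returning P(human | tokens) in (0, 1].
    alpha: assumed weight of the discriminator term (alpha=0 is plain beam search).
    """
    scored = []
    for tokens, s_gen in candidates:
        s_dis = math.log(discriminator(tokens))
        scored.append((s_gen + alpha * s_dis, tokens))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(tokens, score) for score, tokens in scored[:beam_size]]


def toy_discriminator(tokens):
    """Hypothetical discriminator: repeated tokens look machine-generated."""
    return 0.1 if len(set(tokens)) < len(tokens) else 0.9


candidates = [(["the", "the"], -1.0), (["the", "cat"], -1.2), (["a", "cat"], -2.5)]
plain = das_rerank(candidates, toy_discriminator, alpha=0.0)
reranked = das_rerank(candidates, toy_discriminator, alpha=1.0)
print([tokens for tokens, _ in plain])
print([tokens for tokens, _ in reranked])
```

With `alpha=0.0` the generator's favourite, the repetitive candidate, stays on top. With `alpha=1.0` the discriminator term pushes it out of the beam, which is the intended effect of the refined score.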

4.4. Retraining the Discriminator

The discriminator can be fine-tuned using the outputs that were improved by the re-ranking.
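One way to picture the data preparation for this step is sketched below. It assumes that every re-ranked output is labelled as machine-generated and paired with its human reference; whether the references are reused in this way is an assumption, not something these notes state. `build_retraining_set` and `toy_das_generate` are hypothetical names, and the actual fine-tuning call is left out.

```python
def build_retraining_set(sources, references, das_generate):
    """Pair each DAS output (label 0, machine) with its human reference (label 1)."""
    examples = []
    for source, reference in zip(sources, references):
        examples.append((source, das_generate(source), 0))  # machine-generated
        examples.append((source, reference, 1))             # human-produced
    return examples


def toy_das_generate(source):
    """Hypothetical stand-in for decoding with DAS: copy the first three words."""
    return " ".join(source.split()[:3])


sources = ["the cat sat on the mat all day"]
references = ["a cat lounged on a mat"]
retraining_set = build_retraining_set(sources, references, toy_das_generate)
for example in retraining_set:
    print(example)
```

The resulting examples would then be fed to whatever fine-tuning routine the discriminator uses, so that it learns to tell the improved outputs apart from human text.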

Resources

- https://github.com/microsoft/unilm#abstractive-summarization--cnn--daily-mail

- https://zenodo.org/record/1168855

- https://tldr.webis.de/

- Author: 鱼咸滚酱

- Post link: https://github.com/WangMeng2018/WangMeng2018.github.io/tree/master/2020/03/05/Report-Discriminative-Adversarial-Search-for-Abstractive-Summarization/

- Copyright notice: Unless otherwise stated, all articles on this blog are licensed under the Apache License 2.0. Please credit the source when reposting!