GameWikiSum: a Novel Large Multi-Document Summarization Dataset

Abstract

GameWikiSum: https://github.com/Diego999/GameWikiSum

The input documents are long professional video game reviews, and the references are the gameplay sections of the corresponding Wikipedia pages. Both abstractive and extractive models can be trained on the dataset.

1. Introduction

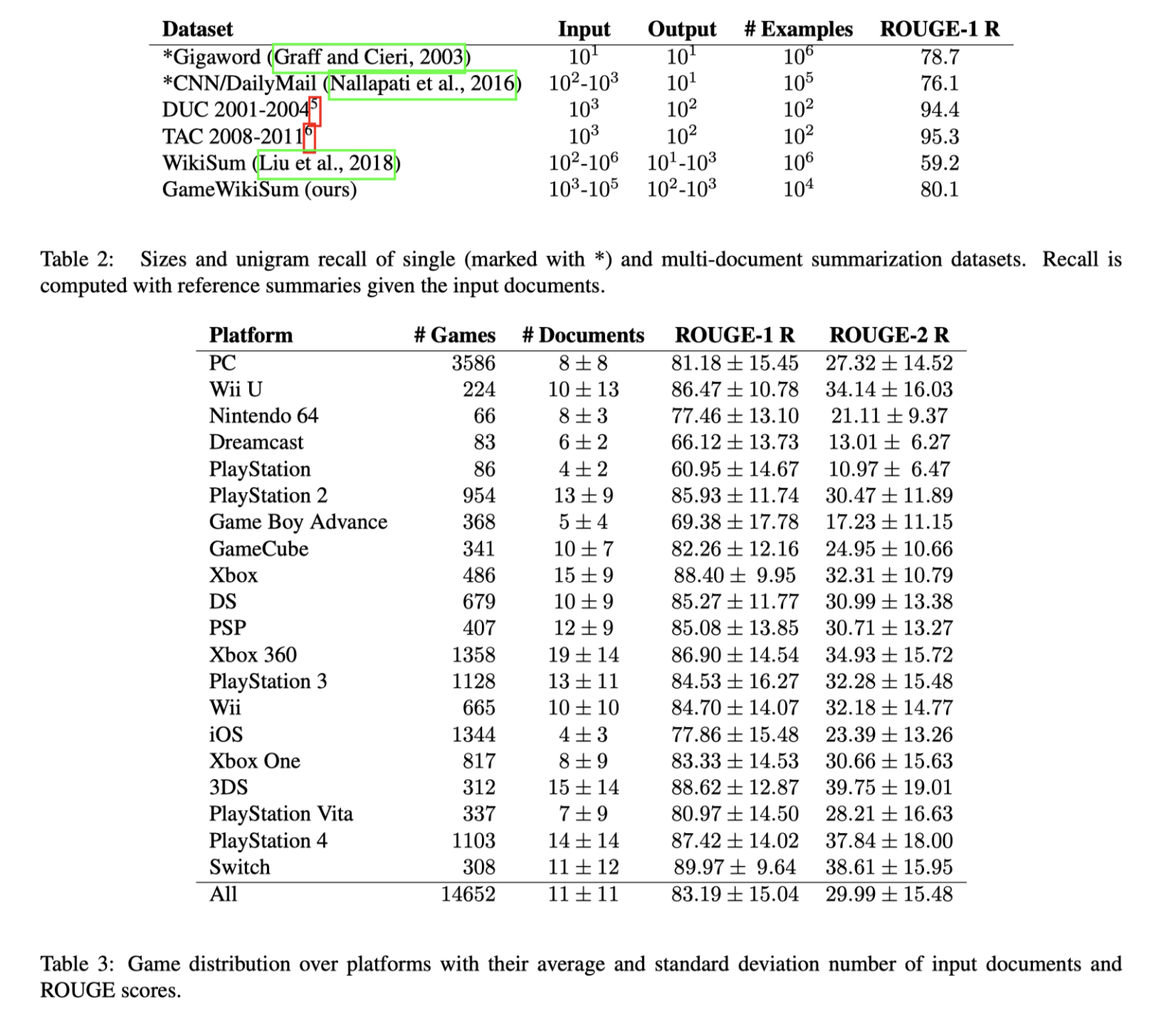

The dataset contains 14 652 samples, based on professional video game reviews obtained via Metacritic and gameplay sections from Wikipedia. Wikipedia pages are available in multiple languages, which opens up the possibility for multilingual multi-document summarization.

2. GameWikiSum

2.1. Dataset Creation

Aspects reviewed in video games include the gameplay, the richness and diversity of dialogues, and the soundtrack. Compared to the usual reviews written by users, professional reviews are assumed to be longer and of higher quality.

In order to match games with their respective Wikipedia pages, we use the game title as the query in the Wikipedia search engine and employ a set of heuristic rules.

2.2. Heuristic matching

The authors crawl approximately 265 000 professional reviews for around 72 000 games, along with 26 000 Wikipedia gameplay sections. Because there is no automatic mapping from a game to its Wikipedia page, they design a set of heuristics. The heuristics are the following, applied in this order:

Exact title match: titles must match exactly;

Removing tags: when a game has the same name as its franchise, its Wikipedia page has a title of the form Game (year video game) or Game (video game), so the tag is removed before comparing titles;

Extension match: sometimes, a sequel or an extension is not listed in Wikipedia. In this case, we map it to the Wikipedia page of the original game.

Only games with at least one review and a matching Wikipedia page that contains a gameplay section are kept.
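The ordered heuristics above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names `strip_tag` and `match_game`, the regular expression for the tag, and the rule used for the extension match (the game title starts with an existing Wikipedia title followed by a non-alphanumeric separator) are all assumptions made for illustration.

```python
import re
from typing import List, Optional

# Assumed tag format: "Game (video game)" or "Game (2016 video game)".
TAG_RE = re.compile(r"\s*\((?:\d{4}\s+)?video game\)$")


def strip_tag(wiki_title: str) -> str:
    """Remove a trailing '(video game)' or '(YEAR video game)' tag, if any."""
    return TAG_RE.sub("", wiki_title)


def match_game(game_title: str, wiki_titles: List[str]) -> Optional[str]:
    """Return the matching Wikipedia title, trying the heuristics in order."""
    # 1. Exact title match.
    for wiki in wiki_titles:
        if wiki == game_title:
            return wiki

    # 2. Removing tags: compare after dropping the "(... video game)" suffix.
    for wiki in wiki_titles:
        if strip_tag(wiki) == game_title:
            return wiki

    # 3. Extension match (assumed rule): the game title begins with the title
    #    of an existing page, followed by a separator such as ":" or a space.
    candidates = []
    for wiki in wiki_titles:
        base = strip_tag(wiki)
        if (
            base
            and len(game_title) > len(base)
            and game_title.startswith(base)
            and not game_title[len(base)].isalnum()
        ):
            candidates.append((len(base), wiki))
    if candidates:
        # Prefer the longest base title, i.e. the most specific original game.
        return max(candidates)[1]

    return None
```

For example, under these assumptions `match_game("Doom", ["Doom (franchise)", "Doom (2016 video game)"])` resolves through the second heuristic, while an expansion whose own page does not exist falls through to the third and is mapped to the page of the original game.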

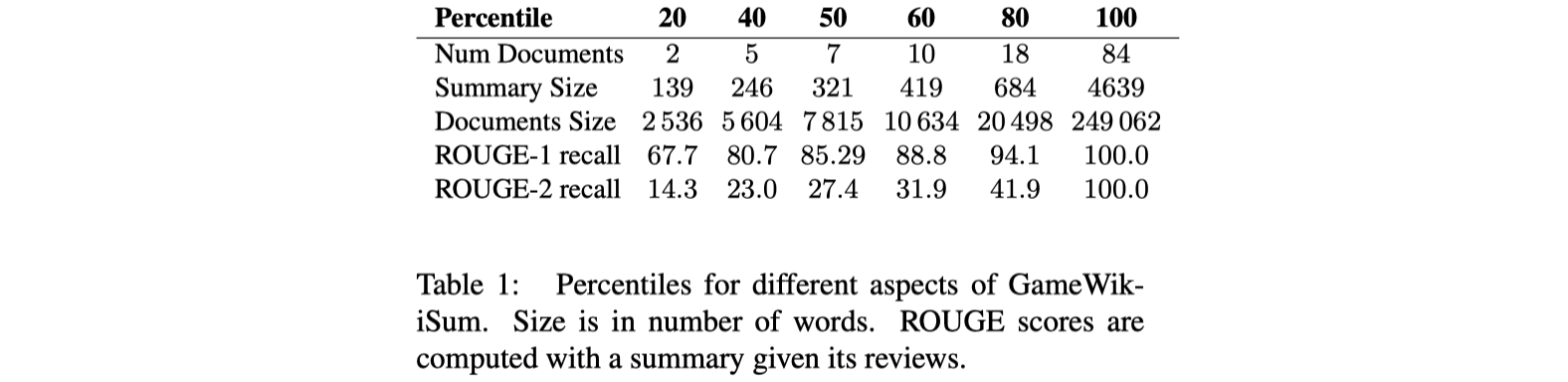

2.3. Descriptive Statistics

3. Experiments and Results

3.1. Evaluation Metric

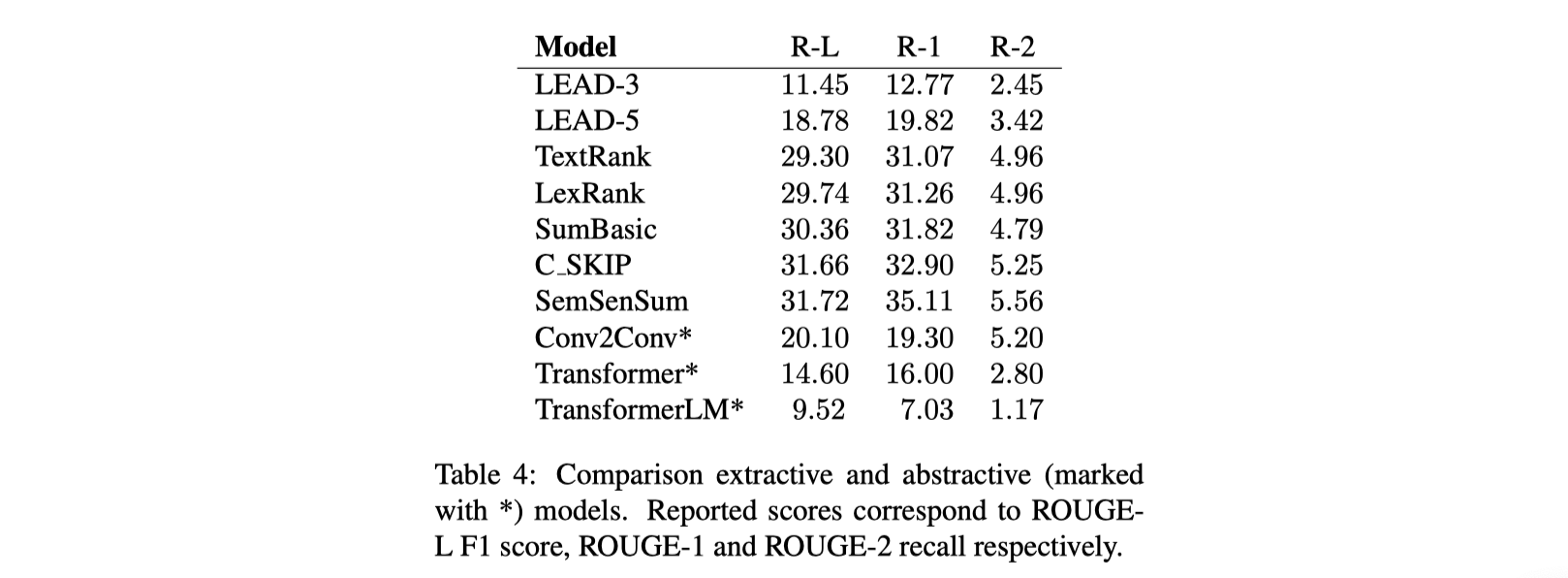

3.3. Results

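- Author of this post: 鱼咸滚酱

- Link to this post: https://github.com/WangMeng2018/WangMeng2018.github.io/tree/master/2020/03/06/Report-GameWikiSum-a-Novel-Large-Multi-Document-Summarization-Dataset/

- Copyright notice: Unless otherwise stated, all articles on this blog are licensed under the Apache License 2.0. Please credit the source when reposting!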