Learning by Semantic Similarity Makes Abstractive Summarization Better

Abstract

One obstacle in abstractive summarization is that many different summaries can be correct for the same document.

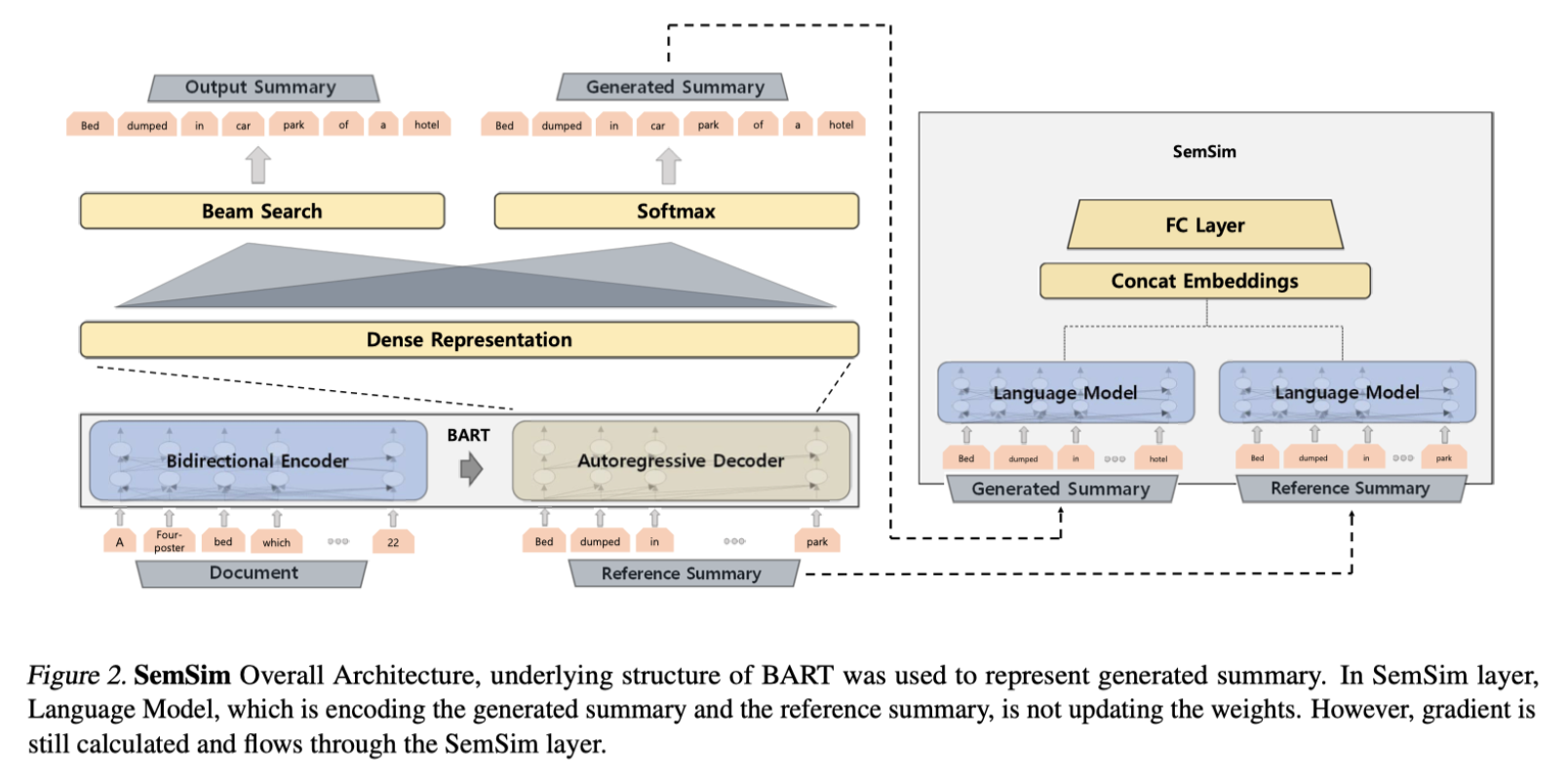

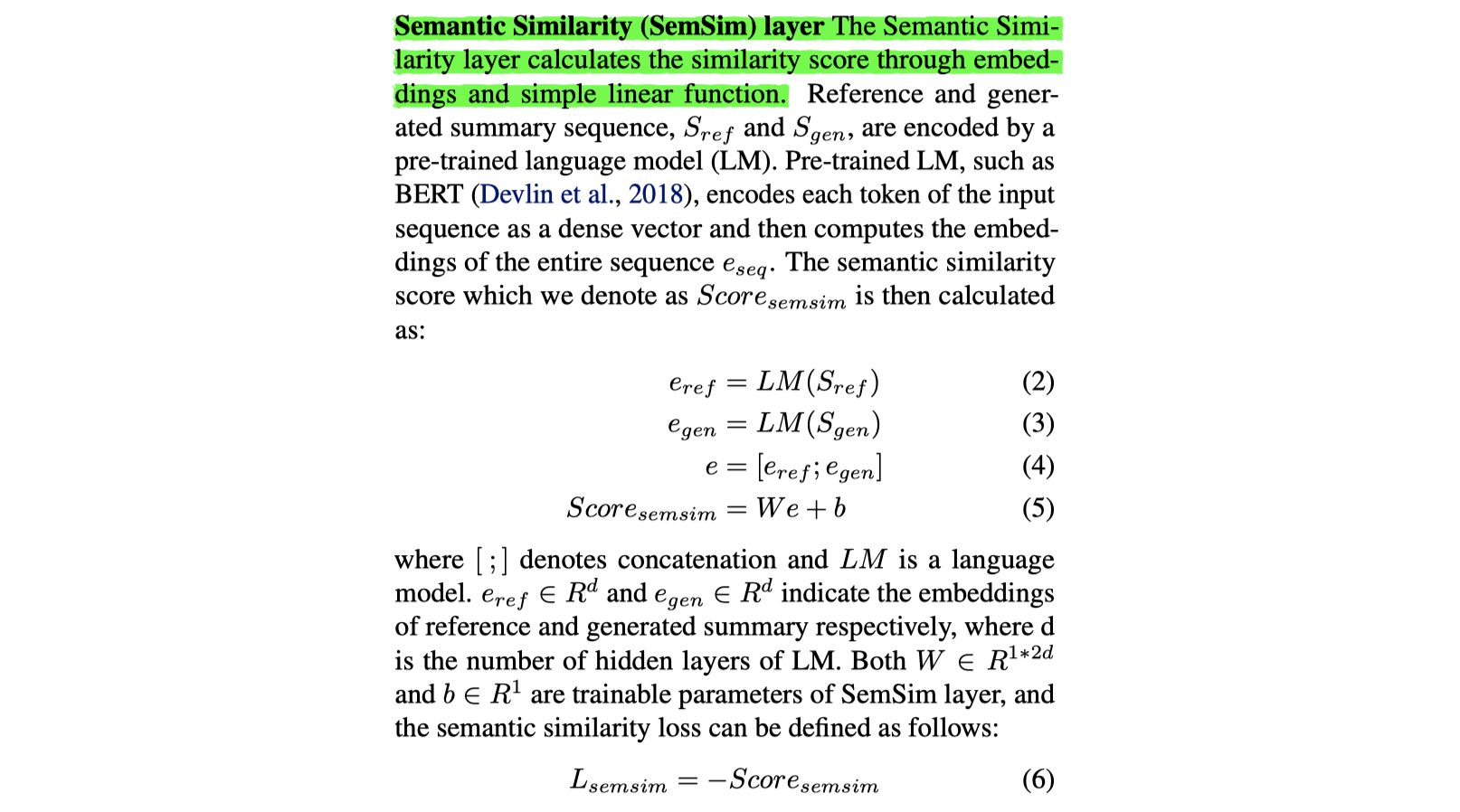

Semantic Similarity strategy: take the semantic meaning of generated summaries into account during training. The training objective includes maximizing a semantic similarity score, which is computed by an additional layer that estimates the semantic similarity between the generated summary and the reference summary.

Code: https://github.com/icml-2020-nlp/semsim

Model

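The paper argues that the usual maximum-likelihood objective cannot cope with the fact that a document may have multiple valid reference summaries, and that it introduces noise instead. It therefore uses the semantic similarity between the generated summary and the reference summary as the training objective. The semantic similarity itself is simple to compute, but a model trained on it alone is hard to train, so it is summed with the maximum-likelihood loss to form the final objective, which speeds up training. According to the experimental analysis, the ROUGE scores do improve.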
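To make the summed objective concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the paper scores similarity with a learned layer, whereas this sketch swaps in cosine similarity over mean-pooled embeddings as a stand-in. The function names and the `semsim_weight` parameter are hypothetical, introduced only for illustration.

```python
import math

def token_nll(reference_token_probs):
    """Maximum-likelihood term: mean negative log-probability that the
    model assigns to each reference token."""
    return -sum(math.log(p) for p in reference_token_probs) / len(reference_token_probs)

def mean_pool(vectors):
    """Average a list of equal-length token embeddings into one vector."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

def cosine(a, b):
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def semsim_score(generated_embeddings, reference_embeddings):
    """ASSUMPTION: stand-in for the paper's learned similarity layer.
    Here it is just cosine similarity of mean-pooled embeddings."""
    return cosine(mean_pool(generated_embeddings), mean_pool(reference_embeddings))

def combined_loss(reference_token_probs, generated_embeddings,
                  reference_embeddings, semsim_weight=1.0):
    """Sum of the maximum-likelihood loss and the negated similarity score.
    Minimizing this maximizes similarity. semsim_weight is hypothetical."""
    ml_loss = token_nll(reference_token_probs)
    semsim_loss = -semsim_score(generated_embeddings, reference_embeddings)
    return ml_loss + semsim_weight * semsim_loss

# Toy usage: two 2-d token embeddings per summary, made-up probabilities.
loss = combined_loss(
    reference_token_probs=[0.5, 0.5],
    generated_embeddings=[[1.0, 0.0], [1.0, 0.0]],
    reference_embeddings=[[1.0, 0.0], [0.0, 1.0]],
)
print(round(loss, 4))
```

The similarity term is negated because the score is something to maximize while the loss is minimized. Keeping the maximum-likelihood term in the sum gives the model a dense token-level signal, which matches the note above that the similarity objective alone is hard to train.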

Overall, the paper is not very novel.

- Author: 鱼咸滚酱

- Post link: https://github.com/WangMeng2018/WangMeng2018.github.io/tree/master/2020/03/11/Report-Learning-by-Semantic-Similarity-Makes-Abstractive-Summarization-Better/

- Copyright notice: Unless otherwise stated, all articles on this blog are licensed under the Apache License 2.0. Please credit the source when reposting!